When you build a streaming service that ships apps to Roku, Samsung Tizen, Google TV, LG webOS, iOS, Android, and web, the backend architecture determines how fast you can ship features, how well the system handles scale, and how much your infrastructure costs. The classic architecture question — microservices versus monolith — applies to streaming backends with specific constraints that make the answer less obvious than the industry default of “just use microservices.”

This guide walks through the real tradeoffs for OTT backend architecture, where service boundaries actually make sense, and when a monolith or modular monolith is the better starting point.

What makes streaming backends different

A streaming backend is not a typical web application backend. It has several characteristics that affect architecture decisions:

Asymmetric read/write ratio. Content is ingested and encoded once, but served millions of times. The read path (content catalog, manifests, playback sessions) must be extremely fast and scalable. The write path (content management, encoding pipeline, subscriber management) is lower volume.

Latency-sensitive playback path. The chain from manifest request → segment request → DRM license acquisition → playback start must be fast. Latency added anywhere in this chain shows up directly as slower startup time for the viewer. For low-latency live streaming, this is even more critical.

Platform-specific API requirements. Each device platform may need slightly different API responses: different image sizes for different screen resolutions, different manifest formats (HLS vs DASH), different metadata structures for platform-specific features like Google TV Watch Next integration.

Event-driven video pipeline. Encoding, packaging, and delivery are inherently asynchronous, event-driven workflows. A new piece of content triggers: ingest → analysis → encode → package → publish to CDN → update catalog. This pipeline is naturally decomposed into stages.

The monolith starting point

A monolithic backend for a streaming service contains all business logic in a single deployable unit: content catalog, user authentication, entitlements, playback session management, search, and recommendations.

Advantages for early-stage services

- Faster initial development. No service-to-service communication overhead. No distributed tracing. No API contract versioning between services. You can build and ship the first version faster.

- Simpler debugging. A bug in the playback flow is in one codebase. You can step through the entire request path in a single debugger session.

- Lower operational cost. One deployment pipeline, one monitoring setup, one set of infrastructure. For a small team launching an OTT service, the operational overhead of microservices can be prohibitive.

When the monolith breaks down

- Scaling asymmetry. If your catalog API handles 10x the traffic of your user management API, you want to scale them independently. In a monolith, you scale the entire application.

- Team scaling. When multiple teams work on the same codebase, merge conflicts, deployment coordination, and unclear ownership slow development.

- Technology constraints. The video encoding pipeline benefits from different technology choices (GPU instances, different languages) than the web-facing API.

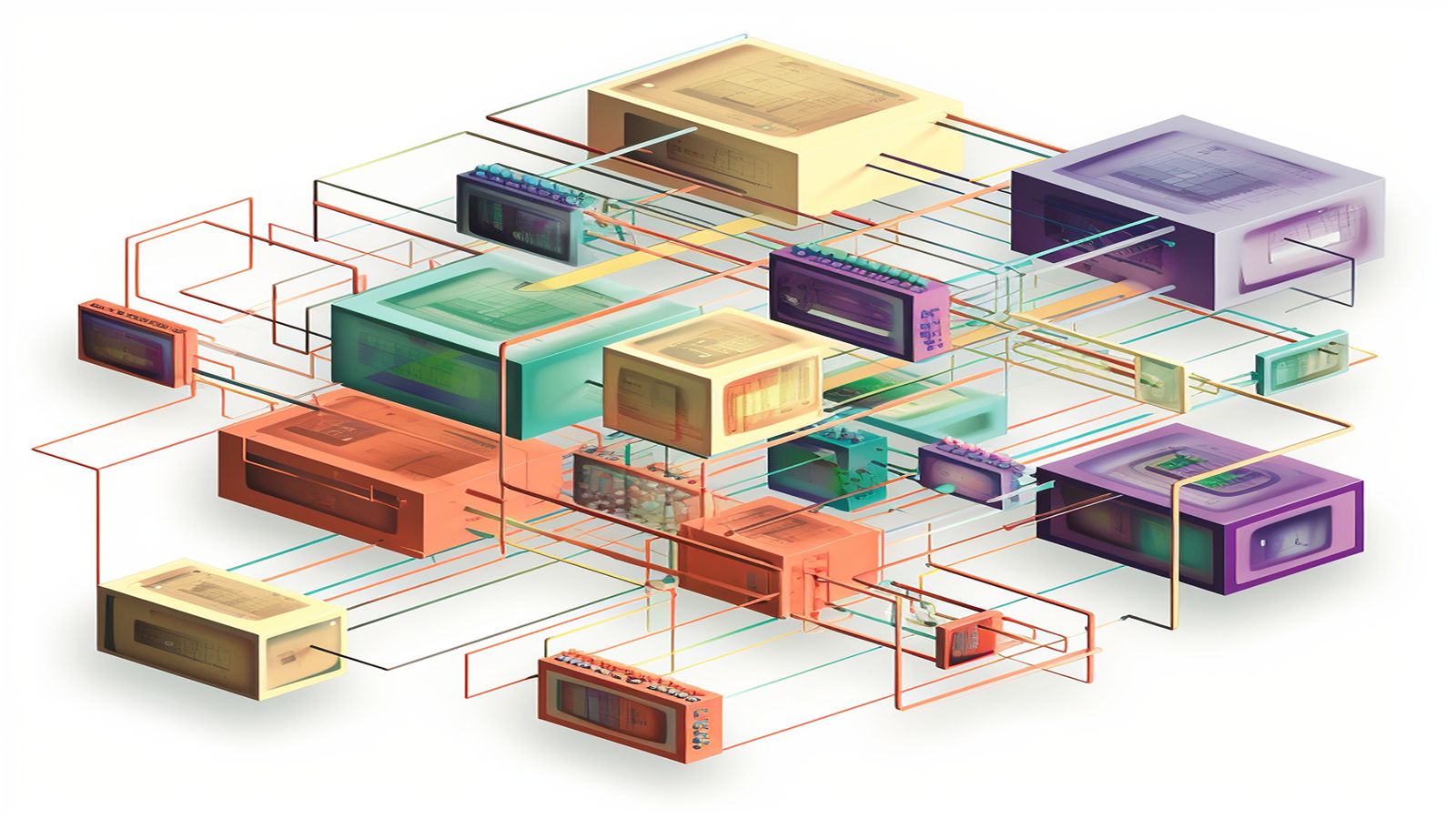

Microservices for streaming

Microservices decompose the backend into independently deployable services, each responsible for a bounded context. For a streaming service, natural service boundaries include:

Content catalog service

Manages metadata: titles, descriptions, images, availability windows, content ratings. Exposes a fast read API that clients query for browse screens, search results, and detail pages.

This service benefits from aggressive caching (content metadata changes infrequently), read replicas, and potentially a different data store optimised for read performance (Elasticsearch for search, a document store for catalog browsing).
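The caching idea can be sketched as a small read-through cache with a time-to-live. This is a minimal illustration, not a production cache: `TTLCache`, `load_title_from_store`, and the 60-second TTL are hypothetical names and values, and a real deployment would more likely use a shared cache or CDN caching in front of the API.

```python
import time
from typing import Callable, Dict, Tuple


class TTLCache:
    """Read-through cache: serve from memory until an entry is older than ttl_seconds."""

    def __init__(self, loader: Callable[[str], dict], ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._loader = loader    # hypothetical function that reads the catalog data store
        self._ttl = ttl_seconds
        self._clock = clock      # injectable so the example can control time
        self._entries: Dict[str, Tuple[float, dict]] = {}

    def get(self, content_id: str) -> dict:
        now = self._clock()
        cached = self._entries.get(content_id)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]     # fresh hit: no data store round trip
        value = self._loader(content_id)
        self._entries[content_id] = (now, value)
        return value


# Usage with a fake data store and a fake clock (both assumptions for the demo).
db_reads = []


def load_title_from_store(content_id: str) -> dict:
    db_reads.append(content_id)
    return {"id": content_id, "title": f"Title {content_id}"}


fake_now = [0.0]
cache = TTLCache(load_title_from_store, ttl_seconds=60, clock=lambda: fake_now[0])
cache.get("movie-1")   # miss: loads from the store
cache.get("movie-1")   # hit: served from memory
fake_now[0] = 61.0
cache.get("movie-1")   # entry expired: loads again
print(len(db_reads))   # 2
```

Because metadata changes infrequently, a short TTL trades a bounded staleness window for far fewer reads against the primary store.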

Playback service

Handles the playback-critical path: given a content ID and user context, return the manifest URL, DRM configuration, and any playback parameters. This service must be fast, highly available, and as simple as possible.

The playback service is where DRM license proxy logic, entitlement checks, and geo-restriction enforcement live. Every additional dependency in this path is a risk to playback start time.
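As a rough sketch of what that playback decision might look like, the function below runs the entitlement and geo checks and returns the manifest and DRM details only when both pass. All type names, field names, reason codes, and URLs here are illustrative assumptions, and the inputs are assumed to be resolved upstream so the function itself has no network dependencies.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class PlaybackRequest:
    content_id: str
    user_entitlements: FrozenSet[str]   # content IDs the user may watch (assumed shape)
    country: str                        # country code resolved upstream, e.g. via GeoIP


@dataclass(frozen=True)
class ContentRights:
    content_id: str
    allowed_countries: FrozenSet[str]
    manifest_url: str
    drm_license_url: str


@dataclass(frozen=True)
class PlaybackResponse:
    allowed: bool
    reason: Optional[str] = None
    manifest_url: Optional[str] = None
    drm_license_url: Optional[str] = None


def authorize_playback(req: PlaybackRequest, rights: ContentRights) -> PlaybackResponse:
    """Pure function: the caller has already looked up rights for req.content_id."""
    if req.content_id not in req.user_entitlements:
        return PlaybackResponse(False, reason="not_entitled")
    if req.country not in rights.allowed_countries:
        return PlaybackResponse(False, reason="geo_blocked")
    return PlaybackResponse(True, manifest_url=rights.manifest_url,
                            drm_license_url=rights.drm_license_url)


# Usage with placeholder URLs.
rights = ContentRights(
    "movie-42", frozenset({"US", "CA"}),
    "https://cdn.example.com/movie-42/master.m3u8",
    "https://drm.example.com/license",
)
ok = authorize_playback(PlaybackRequest("movie-42", frozenset({"movie-42"}), "US"), rights)
blocked = authorize_playback(PlaybackRequest("movie-42", frozenset({"movie-42"}), "DE"), rights)
print(ok.allowed, blocked.reason)
```

Keeping the decision logic free of I/O makes it easy to test and keeps the number of runtime dependencies in the playback path visible at the call site.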

User and entitlement service

Manages user accounts, subscriptions, entitlements, and concurrent stream limits. The workload is mixed: a steady stream of writes (user registration, subscription changes, session heartbeats) alongside a high volume of reads (entitlement checks during playback).
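One common way to enforce concurrent stream limits is to count sessions whose heartbeat arrived recently. The sketch below assumes that approach. `StreamLimiter`, the limit of two streams, and the 30-second timeout are hypothetical, and a real service would keep this state in a shared store rather than in process memory.

```python
from typing import Dict


class StreamLimiter:
    """Tracks active sessions per user; a session expires when heartbeats stop."""

    def __init__(self, max_streams: int, heartbeat_timeout: float) -> None:
        self._max = max_streams
        self._timeout = heartbeat_timeout
        # user_id -> {session_id: timestamp of last heartbeat}
        self._sessions: Dict[str, Dict[str, float]] = {}

    def heartbeat(self, user_id: str, session_id: str, now: float) -> bool:
        """Record a heartbeat. Returns False if the session would exceed the limit."""
        sessions = self._sessions.setdefault(user_id, {})
        # Drop sessions that have not sent a heartbeat within the timeout.
        for sid in [s for s, seen in sessions.items() if now - seen > self._timeout]:
            del sessions[sid]
        if session_id in sessions or len(sessions) < self._max:
            sessions[session_id] = now
            return True
        return False


# Usage: timestamps are passed in explicitly to keep the example deterministic.
limiter = StreamLimiter(max_streams=2, heartbeat_timeout=30)
results = [
    limiter.heartbeat("u1", "tv", now=0),       # first stream
    limiter.heartbeat("u1", "phone", now=5),    # second stream
    limiter.heartbeat("u1", "tablet", now=10),  # third stream: over the limit
    limiter.heartbeat("u1", "tv", now=20),      # tv keeps playing
    limiter.heartbeat("u1", "tablet", now=40),  # phone went silent, slot is free
]
print(results)  # [True, True, False, True, True]
```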

Encoding pipeline service

Manages the video processing pipeline: ingest, analysis, encoding, packaging, and CDN publish. This is event-driven and benefits from queue-based architecture (SQS, Kafka, or similar). It runs on different infrastructure (GPU instances for encoding) than the web-facing API services.
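The stage-by-stage flow can be sketched with an in-process queue standing in for SQS or Kafka. This is only an illustration of the event chain: the stage names follow the pipeline described earlier, while `run_stage`, `drain`, and the event shape are hypothetical, and a real pipeline would add retries, failure handling, and separate workers per stage.

```python
import queue
from typing import Dict, List, Optional

STAGES = ["ingest", "analysis", "encode", "package", "publish_to_cdn", "update_catalog"]

# Each stage names the stage that its completion event should trigger.
NEXT_STAGE: Dict[str, Optional[str]] = {
    stage: (STAGES[i + 1] if i + 1 < len(STAGES) else None)
    for i, stage in enumerate(STAGES)
}


def run_stage(stage: str, content_id: str) -> None:
    """Placeholder for the real work (for example, an encode job on a GPU instance)."""


def drain(events: queue.Queue) -> List[str]:
    """Process events until the queue is empty; return the stages in completion order."""
    completed: List[str] = []
    while True:
        try:
            event = events.get_nowait()
        except queue.Empty:
            return completed
        run_stage(event["stage"], event["content_id"])
        completed.append(event["stage"])
        following = NEXT_STAGE[event["stage"]]
        if following is not None:
            # Finishing one stage publishes the event that starts the next.
            events.put({"content_id": event["content_id"], "stage": following})


# Usage: a single ingest event drives the content through every stage.
events = queue.Queue()
events.put({"content_id": "ep-101", "stage": "ingest"})
order = drain(events)
print(order)
```

Because each stage only consumes and emits events, individual stages can be scaled or moved to different infrastructure without changing their neighbours.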

Ad service

If your service includes advertising, the ad decisioning, creative conditioning, and SSAI manifest manipulation can be an independent service. This isolates ad-related complexity from the core playback path.

Analytics service

Collects and processes client-side telemetry: playback events, quality metrics, error reports, engagement data. This is a high-volume write path that should be decoupled from the playback-critical services.
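A simple way to keep this write path cheap is to buffer events and hand them off in batches. The sketch below assumes that approach. `EventBuffer` and the batch size are hypothetical, and the `flush` callback stands in for whatever sink is used (a queue, a stream, or bulk inserts).

```python
from typing import Callable, List


class EventBuffer:
    """Accumulates telemetry events and flushes them to a sink in batches."""

    def __init__(self, flush: Callable[[List[dict]], None], max_batch: int) -> None:
        self._flush = flush          # hypothetical sink, e.g. a queue producer
        self._max_batch = max_batch
        self._pending: List[dict] = []

    def record(self, event: dict) -> None:
        self._pending.append(event)
        if len(self._pending) >= self._max_batch:
            self.flush()

    def flush(self) -> None:
        """Send whatever is pending; also called on a timer or at shutdown."""
        if self._pending:
            batch, self._pending = self._pending, []
            self._flush(batch)


# Usage: seven playback events with a batch size of three.
batches: List[List[dict]] = []
buffer = EventBuffer(flush=batches.append, max_batch=3)
for position in range(7):
    buffer.record({"type": "heartbeat", "position_s": position * 10})
buffer.flush()  # flush the remainder
print([len(b) for b in batches])  # [3, 3, 1]
```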

The modular monolith: a pragmatic middle ground

A modular monolith keeps all modules in a single deployable unit but enforces module boundaries within the codebase. Each module has a defined API surface and does not access other modules’ internal data directly.

This gives you:

- Clear boundaries for future decomposition into services

- Simpler operations than distributed microservices

- Shared infrastructure for database, caching, and deployment

- Easy refactoring when you discover your initial boundaries were wrong
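A minimal sketch of such a boundary: the playback module depends only on a small interface, never on the entitlement module's storage. The module and method names are hypothetical. Python does not enforce privacy, so in practice this relies on convention, code review, or an import linter.

```python
from typing import Dict, Protocol, Set


class EntitlementsAPI(Protocol):
    """The only surface other modules are allowed to depend on."""

    def can_watch(self, user_id: str, content_id: str) -> bool: ...


class EntitlementsModule:
    def __init__(self) -> None:
        # Internal state: other modules must not read or write this directly.
        self._grants: Dict[str, Set[str]] = {}

    def grant(self, user_id: str, content_id: str) -> None:
        self._grants.setdefault(user_id, set()).add(content_id)

    def can_watch(self, user_id: str, content_id: str) -> bool:
        return content_id in self._grants.get(user_id, set())


class PlaybackModule:
    def __init__(self, entitlements: EntitlementsAPI) -> None:
        self._entitlements = entitlements   # depends on the interface only

    def start(self, user_id: str, content_id: str) -> str:
        if not self._entitlements.can_watch(user_id, content_id):
            raise PermissionError(f"{user_id} is not entitled to {content_id}")
        return f"session:{user_id}:{content_id}"


# Usage: both modules run in one process and are wired together at startup.
entitlements = EntitlementsModule()
entitlements.grant("u1", "movie-42")
playback = PlaybackModule(entitlements)
print(playback.start("u1", "movie-42"))  # session:u1:movie-42
```

If the entitlement module is later extracted into its own service, `PlaybackModule` could be given a network client that implements the same `EntitlementsAPI` without changing the playback code.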

For OTT services with small-to-medium engineering teams (3-15 developers), a modular monolith is often the right starting architecture. You can extract services later when scaling demands require it.

The first services to extract are usually the encoding pipeline (it runs on different infrastructure) and analytics ingestion (it has fundamentally different scale and latency characteristics).

API gateway and platform abstraction

Whether you use microservices or a monolith, a platform abstraction layer is valuable for multi-platform OTT apps.

Backend-for-frontend (BFF) pattern

Each client platform (Roku, Samsung, Google TV, iOS, Android, web) has different capabilities, screen sizes, and API requirements. A BFF layer sits between the client and the backend services, translating generic backend responses into platform-specific formats.

Benefits:

- The Roku BFF can return BrightScript-friendly data structures

- The Samsung BFF can include Tizen-specific metadata

- The Google TV BFF can include Watch Next-formatted content for home screen integration

- Each BFF can optimise response sizes and image dimensions for its platform
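The translation step might look like the sketch below, which picks an image width and manifest format per platform from one generic catalog item. The profile values, field names, and URL scheme are illustrative assumptions, not real platform requirements.

```python
from typing import Dict

# Hypothetical per-platform presentation profiles; real values depend on each device's UI.
PLATFORM_PROFILES: Dict[str, dict] = {
    "roku": {"poster_width": 290, "manifest_format": "hls"},
    "web": {"poster_width": 480, "manifest_format": "dash"},
}


def to_platform_response(item: dict, platform: str) -> dict:
    """Translate a generic catalog item into the shape one client platform expects."""
    profile = PLATFORM_PROFILES[platform]
    manifest_format = profile["manifest_format"]
    return {
        "id": item["id"],
        "title": item["title"],
        # Ask the (assumed) image service for a width suited to this platform.
        "posterUrl": f'{item["poster_base_url"]}?w={profile["poster_width"]}',
        "manifestUrl": item["manifests"][manifest_format],
    }


# Usage: one backend item, two platform-specific responses.
item = {
    "id": "movie-42",
    "title": "Example Movie",
    "poster_base_url": "https://img.example.com/movie-42/poster.jpg",
    "manifests": {
        "hls": "https://cdn.example.com/movie-42/master.m3u8",
        "dash": "https://cdn.example.com/movie-42/manifest.mpd",
    },
}
roku_view = to_platform_response(item, "roku")
web_view = to_platform_response(item, "web")
print(roku_view["posterUrl"], web_view["manifestUrl"])
```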

API versioning

With multiple client platforms that update at different rates (a Roku channel update can take weeks to clear certification, while a web update ships immediately), API versioning is essential. Each client version should be pinned to a specific API version, and the backend should support multiple API versions simultaneously.
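One way to serve several API versions side by side is a registry that maps each version to its own response builder. In this sketch the version numbers, the `detail_v1` and `detail_v2` shapes, and the field names are all hypothetical. How the client communicates its pinned version (URL prefix, header, or otherwise) is left open.

```python
from typing import Callable, Dict


def detail_v1(item: dict) -> dict:
    # Older clients expect a flat rating string.
    return {"title": item["title"], "rating": item["rating"]}


def detail_v2(item: dict) -> dict:
    # Newer clients expect a structured rating object.
    return {"title": item["title"],
            "contentRating": {"system": item["rating_system"], "value": item["rating"]}}


# Every version that is still installed on some device stays registered here.
HANDLERS: Dict[int, Callable[[dict], dict]] = {1: detail_v1, 2: detail_v2}


def render_detail(item: dict, api_version: int) -> dict:
    handler = HANDLERS.get(api_version)
    if handler is None:
        raise ValueError(f"unsupported API version: {api_version}")
    return handler(item)


# Usage: the same backend item rendered for clients pinned to different versions.
item = {"title": "Example Movie", "rating": "PG-13", "rating_system": "MPA"}
old_client = render_detail(item, api_version=1)
new_client = render_detail(item, api_version=2)
print(old_client, new_client)
```

A version can be removed from the registry only once usage data shows that no installed client still requests it.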

Testing architecture

The choice between microservices and monolith affects testing strategy:

Monolith: integration testing is straightforward. The entire system is available in one process. End-to-end tests cover the full request path.

Microservices: each service has unit tests and integration tests in isolation. End-to-end tests require deploying all services or using contract testing (each service tests against the API contracts of its dependencies without running them).
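The contract-testing idea can be illustrated with a hand-rolled check: the consumer publishes the fields and types it relies on, and the provider's test suite verifies its responses against that list. The contract, field names, and helper below are hypothetical. Real projects typically use a dedicated tool such as Pact rather than code like this.

```python
from typing import Dict, List

# What the playback service (consumer) relies on from the entitlement service (provider).
ENTITLEMENT_CONTRACT: Dict[str, type] = {
    "user_id": str,
    "content_id": str,
    "entitled": bool,
}


def contract_violations(response: dict, contract: Dict[str, type]) -> List[str]:
    """Return a list of problems; an empty list means the response honours the contract."""
    problems: List[str] = []
    for field, expected in contract.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected):
            problems.append(f"wrong type for {field}: expected {expected.__name__}")
    return problems


# Usage: run in the provider's test suite against a sample of its own responses.
good = {"user_id": "u1", "content_id": "movie-42", "entitled": True, "plan": "premium"}
bad = {"user_id": "u1", "entitled": "yes"}
print(contract_violations(good, ENTITLEMENT_CONTRACT))  # []
print(contract_violations(bad, ENTITLEMENT_CONTRACT))
```

Extra fields such as `plan` pass the check, which lets the provider add fields without breaking consumers.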

For OTT services, end-to-end playback testing is non-negotiable regardless of architecture. At least one test must run against the complete system and simulate the full viewer flow: authenticate → browse → select content → start playback → verify video frames → stop playback. See our guide on device fragmentation and testing for the device side of this testing.

Practical recommendation

- Start with a modular monolith if your team is under 15 engineers and you are launching a new service. Ship faster and establish boundaries.

- Extract the encoding pipeline first — it has fundamentally different infrastructure requirements.

- Extract the analytics pipeline second — it has different scale and latency characteristics.

- Extract the playback service when playback reliability requires independent scaling and deployment.

- Use the BFF pattern from the start to manage multi-platform API differences, regardless of backend architecture.

The architecture should serve the product and team, not the other way around. A well-structured monolith that ships features to viewers is better than a perfectly decomposed microservice architecture that is still in development. For foundational streaming architecture guidance, see our streaming app architecture solutions.